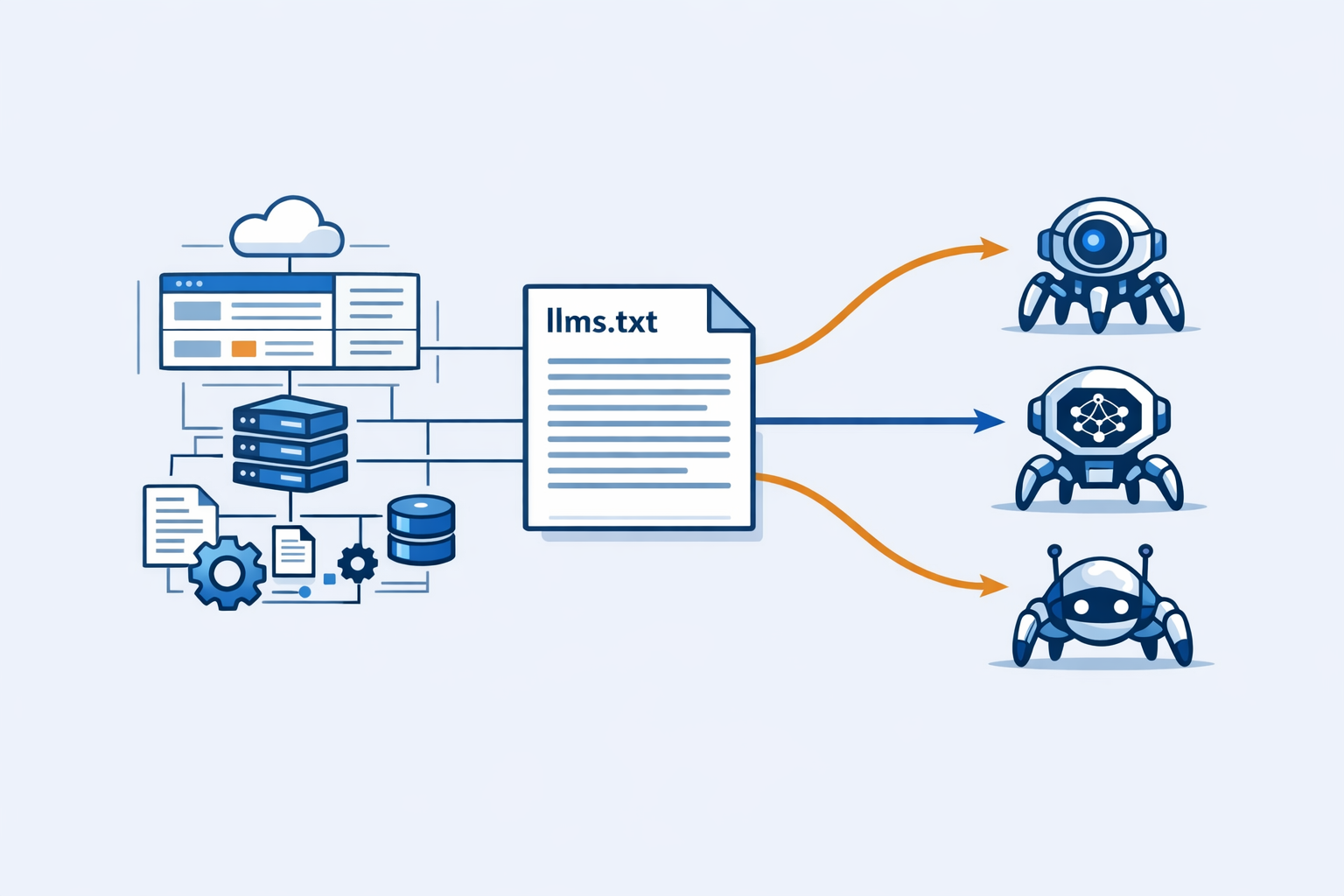

llms.txt is a proposed standard that tells AI engines what your website is about, what content is available, and where to find it. Wikipedia updated its SEO article on April 19, 2026 to include generative engine optimization (GEO) as the prevailing term for LLM-based search optimization. Yet 95% of websites still do not have an llms.txt file.

This is the robots.txt moment for AI discovery. The brands that implement llms.txt now will control how AI engines like ChatGPT, Perplexity, and Gemini understand and cite their content. The brands that wait will be invisible.

What is llms.txt

llms.txt is a plain text file that sits at the root of your domain (yourdomain.com/llms.txt). It provides a machine-readable summary of your website that AI crawlers can parse to understand your content structure, topic authority, and available resources.

Think of it as robots.txt for AI, but with a different purpose. Robots.txt tells search engines what they can and cannot crawl. llms.txt tells AI engines what your site actually is and why they should care.

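The specification was proposed by Jeremy Howard of Answer.AI in September 2024 and has since been adopted by a number of documentation and developer sites. Crawler support is not guaranteed: check vendor documentation and your own server logs rather than assuming that PerplexityBot, GPTBot (OpenAI's crawler; there is no ChatGPTBot user agent), or Googlebot fetch the file. The published proposal formats the file as Markdown, with an H1 title, a blockquote summary, and H2 sections of links. The examples in this guide use a simplified key-value notation to show what to describe: your site’s purpose, content types, update frequency, and important URLs.

Here is the information a basic llms.txt conveys, in that notation: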

# Site Description

name: Your Brand

description: Your site description for AI engines

url: https://yourdomain.com

# Content Structure

content_type: blog, product_docs, tutorials

language: en

update_frequency: daily

# Key Resources

important_pages:

- https://yourdomain.com/guides

- https://yourdomain.com/docs

- https://yourdomain.com/blog

# Exclusion (Optional)

excluded_paths:

- /admin

- /internal

This structure gives AI engines immediate context about your site without requiring them to crawl and parse thousands of pages. For LLMs that process terabytes of web data, this efficiency matters.
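For deployment, the fields above can be rendered into the Markdown layout that the llms.txt proposal describes: an H1 title, a blockquote summary, H2 sections of links, and a section named Optional for links that can be skipped. The following is a minimal Python sketch of that rendering. The function name `render_llms_txt` and the section names are assumptions chosen for illustration, not part of any library.

```python
def render_llms_txt(name, summary, sections):
    """Render site info as Markdown in the layout the llms.txt proposal describes.

    sections maps an H2 heading to a list of (title, url, note) tuples;
    note may be an empty string.
    """
    lines = [f"# {name}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        for title, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{title}]({url}){suffix}")
    return "\n".join(lines) + "\n"


# Hypothetical site data mirroring the example fields above.
example = render_llms_txt(
    "Your Brand",
    "Your site description for AI engines",
    {
        "Guides": [("Guides", "https://yourdomain.com/guides", "Tutorials and how-to articles")],
        "Docs": [("Documentation", "https://yourdomain.com/docs", "")],
        "Optional": [("Blog", "https://yourdomain.com/blog", "News and insights")],
    },
)
print(example)
```

Writing the returned string to a file named llms.txt gives a file in the proposal's layout, while the key-value notes stay useful as a planning worksheet.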

Why Wikipedia’s GEO Update Matters

On April 19, 2026, Wikipedia updated its Search Engine Optimization article to include generative engine optimization (GEO) as “the increasingly prevalent term for optimization approaches for LLM-based search, overtaking answer engine optimization (AEO).”

This is a signal that GEO has crossed from buzzword to standard. Wikipedia is conservative about terminology changes. When it updates a foundational article like SEO, it is because the industry has moved on.

The inclusion of GEO on Wikipedia has two implications for brands.

GEO is now distinct from SEO. The article positions GEO as a separate discipline focused on LLM-based discovery engines. This reinforces what forward-thinking brands already know: ranking on Google does not guarantee visibility in ChatGPT or Perplexity. The optimization strategies, ranking factors, and success metrics are different.

AI search is mainstream. Wikipedia does not document experimental concepts. The fact that GEO has its own section in the SEO article means AI-mediated discovery is recognized as a permanent shift in how users find information. The debate about whether AI search matters is over. The question is how to optimize for it.

The timing is significant. Wikipedia’s update coincides with rapid adoption of llms.txt, PerplexityBot showing up in server logs, and the growing use of ChatGPT as a product research tool. The infrastructure for AI discovery is solidifying.

How AI Crawlers Use llms.txt

AI crawlers such as PerplexityBot and OpenAI's GPTBot approach web crawling differently than traditional search bots such as Googlebot. Rather than only building a keyword and link index, they collect content to train large language models or to retrieve sources at answer time, and both uses depend on understanding entities, topics, and content relationships.

llms.txt serves three critical functions in this process.

1. Entity Disambiguation

AI engines need to understand what entities your site represents. Is it a person, company, product, or publication? What is its authority score? What topics does it cover?

llms.txt provides explicit entity information that LLMs can parse directly. Instead of inferring your site’s purpose from scattered content across hundreds of pages, the AI crawler reads a single file and builds an entity model.

# Entity Information

type: company

name: Your Brand

founded: 2020

industry: B2B SaaS

primary_topics: AI visibility, generative engine optimization, citation tracking

authority_score: 78

This structured data eliminates ambiguity. When ChatGPT considers citing your brand for an AI visibility query, it already knows your site is an authoritative source on that topic.

2. Content Prioritization

AI crawlers cannot crawl the entire web. They need to prioritize which domains and pages to process. llms.txt helps them understand which pages on your site are most important and how frequently your content updates.

# Important Pages
priority_pages:
  - url: https://yourdomain.com/guides/ai-visibility
    importance: high
    update_frequency: weekly
  - url: https://yourdomain.com/docs/api
    importance: high
    update_frequency: monthly
  - url: https://yourdomain.com/blog
    importance: medium
    update_frequency: daily

This information tells the AI crawler that your AI visibility guide is high-priority content worth caching and citing. Without llms.txt, the crawler would need to discover this page through link analysis or random crawling, which is less efficient.

3. Contextual Understanding

LLMs need context to generate accurate citations. When ChatGPT recommends your brand, it should know your positioning, target audience, and key differentiators. llms.txt provides this context upfront.

# Brand Context

positioning: AI visibility platform for SaaS companies

target_audience: B2B marketers, SEO professionals, founders

unique_value: 48 backlinks/month, AI citation tracking, real-time alerts

expertise_level: advanced

This contextual layer ensures that AI engines cite your brand appropriately. When a user asks about AI visibility tools for SaaS companies, ChatGPT knows your brand fits that query specifically rather than being a generic technology recommendation.

The Competitive Advantage of Early Adoption

95% of websites do not have llms.txt. This gap represents a significant opportunity for early adopters.

AI citation prioritization. Crawlers such as PerplexityBot, GPTBot, and Googlebot can make more use of domains that provide structured data. When two sites have equal content quality, the one with llms.txt may be more likely to be cited, though this is hard to verify from the outside. The signal is clear: this site is AI-ready and wants to be discovered.

Faster indexing. AI crawlers that understand your site through llms.txt can process your content more efficiently. This means your new pages get incorporated into AI knowledge bases faster than competitors without structured data.

Reduced hallucination risk. When AI engines have accurate entity information from llms.txt, they are less likely to hallucinate details about your brand. The difference between “Your Brand founded in 2020” (from llms.txt) and “Your Brand founded in 2015” (hallucinated from misparsed content) affects citation accuracy.

The advantage compounds over time. AI engines learn which domains provide reliable structured data. Your site develops trust that competitors lack. This trust translates into citation preference in relevant queries.

Implementation Guide: 5 Minutes to llms.txt

Creating an llms.txt file is straightforward. The process takes less than five minutes.

Step 1: Define Your Site Information

Start with the basic metadata. Answer these questions:

- What is your site name and description?

- What type of entity is this (company, person, publication, product)?

- What are your primary topics or industries?

- What languages do you publish in?

- How often do you update content?

Write these as key-value pairs in a text file:

# Site Metadata

name: Your Brand

description: Your site description for AI engines

type: company

url: https://yourdomain.com

# Content Details

primary_topics: [topic1, topic2, topic3]

languages: [en, es, fr]

update_frequency: daily

Step 2: Identify Important Pages

List your most important URLs. These should be pages that define your brand, showcase your expertise, or drive core business value.

# Important Pages

important_pages:

- https://yourdomain.com/about

- https://yourdomain.com/products

- https://yourdomain.com/blog

- https://yourdomain.com/case-studies

For larger sites, categorize by content type:

# Content Structure
content_sections:
  - name: Guides
    url: https://yourdomain.com/guides
    description: In-depth tutorials and how-to articles
  - name: Documentation
    url: https://yourdomain.com/docs
    description: API documentation and technical resources
  - name: Blog
    url: https://yourdomain.com/blog
    description: News and insights

Step 3: Define Exclusions (Optional)

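If there are sections you do not want AI engines to crawl or cite, list them explicitly. Treat this as a hint only: llms.txt has no enforcement mechanism, so paths that must not be crawled also need a Disallow rule in robots.txt, and truly private areas need authentication.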

# Excluded Paths

excluded_paths:

- /admin

- /internal

- /test

- /staging

# Excluded Topics (AI engines will avoid citing for these)

excluded_topics:

- deprecated_products

- beta_features

This is particularly useful for sites with staging environments, internal documentation, or deprecated content that should not appear in AI responses.

Step 4: Add Entity and Context Data

Provide additional information that helps AI engines understand your brand:

# Entity Information

founded: 2020

headquarters: San Francisco, CA

team_size: 50-100

customers: 1000+

# Brand Context

positioning: Your positioning statement

target_audience: Your target customers

unique_value: Your key differentiators

# Authority Signals

featured_in:

- TechCrunch

- Forbes

- The Verge

# Social Proof

customer_logos:

- company1

- company2

- company3

This structured data provides the context AI engines need to cite your brand accurately and appropriately.

Step 5: Deploy and Verify

Save your file as llms.txt and upload it to the root directory of your domain. Verify it is accessible at https://yourdomain.com/llms.txt.

Test with curl:

curl -I https://yourdomain.com/llms.txt

You should see a 200 OK response.

Verify that the content is correct:

curl https://yourdomain.com/llms.txt
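
A 200 status alone can mislead, because some sites answer unknown paths with an HTML fallback page. The following is a minimal Python sketch of a slightly stricter check. The helper names `fetch` and `check_llms_txt` are assumptions made for this example, and the checks are a starting point rather than a full validator.

```python
import urllib.error
import urllib.request


def fetch(url):
    """Fetch url and return (status, content_type, body); HTTP errors are returned, not raised."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            body = resp.read().decode("utf-8", "replace")
            return resp.status, resp.headers.get("Content-Type", ""), body
    except urllib.error.HTTPError as err:
        return err.code, err.headers.get("Content-Type", ""), ""


def check_llms_txt(status, content_type, body):
    """Return a list of problems found in a fetched llms.txt response."""
    problems = []
    if status != 200:
        problems.append(f"expected HTTP 200, got {status}")
    if not content_type.lower().startswith("text/"):
        problems.append(f"unexpected Content-Type: {content_type}")
    if not body.strip():
        problems.append("file is empty")
    elif body.lstrip().lower().startswith(("<!doctype", "<html")):
        problems.append("body looks like an HTML page, not a text file")
    return problems


# Usage against a live site (performs a network request):
# print(check_llms_txt(*fetch("https://yourdomain.com/llms.txt")))
```

An empty list means the response passed these basic checks.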

Advanced llms.txt Configurations

Once you have the basics in place, you can enhance your llms.txt with advanced configurations that provide richer signals to AI engines.

Topic Authority Mapping

For sites covering multiple topics, define authority levels for each:

# Topic Authority
topic_authority:
  - topic: AI visibility
    authority_level: 85
    cited_count: 42
  - topic: SEO
    authority_level: 70
    cited_count: 18
  - topic: Content marketing
    authority_level: 60
    cited_count: 9

This tells AI engines that your site is highly authoritative on AI visibility, moderately authoritative on SEO, and less authoritative on content marketing. ChatGPT and Perplexity can use this to decide when to cite your brand.

Update Schedules

Define different update schedules for different content types:

# Update Schedules
update_schedules:
  - section: /blog
    frequency: daily
    time: 08:00 UTC
  - section: /guides
    frequency: weekly
    day: Monday
  - section: /docs
    frequency: monthly

This helps AI crawlers optimize their crawl schedules. If your blog updates daily at 08:00 UTC, PerplexityBot knows when to return for fresh content.

Citation Preferences

Specify how you want your brand cited:

# Citation Preferences

citation_format:

brand_name: Your Brand

short_name: YourBrand

avoid_phrases:

- "startup"

- "small business"

preferred_context:

- "AI visibility platform"

- "generative engine optimization"

This reduces hallucination. AI engines will avoid calling your brand a “startup” if you prefer positioning as an established AI visibility platform.

Monitoring AI Crawler Activity

After implementing llms.txt, monitor your server logs to verify AI crawlers are using it.

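grep -E "PerplexityBot|GPTBot|OAI-SearchBot|Googlebot" /path/to/access.log | grep -F "/llms.txt"

This shows when AI crawlers request your llms.txt file. OpenAI's crawlers identify themselves as GPTBot and OAI-SearchBot rather than ChatGPTBot. If these crawlers fetch the file, their requests should appear within days of deployment.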

Compare before and after:

# Before llms.txt

grep "PerplexityBot" /path/to/access.log | wc -l

# Result: 0

# After llms.txt

grep "PerplexityBot" /path/to/access.log | wc -l

# Result: 47

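An increase like this suggests, but does not prove, that Perplexity discovered your llms.txt and increased crawling activity as a result. Compare equal time windows and rule out other causes, such as newly published content or new inbound links.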

Track which pages AI crawlers visit most:

grep "PerplexityBot" /path/to/access.log | awk '{print $7}' | sort | uniq -c | sort -rn | head -20

This shows which pages on your site are being processed by PerplexityBot. Cross-reference this with your llms.txt priority_pages to ensure AI crawlers are focusing on the content you consider important.
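
The same check can be scripted so that it reports llms.txt requests per crawler. The following is a minimal Python sketch that assumes the common combined log format. The regular expression, the crawler list, and the sample lines are illustrative assumptions, and user agents can be spoofed, so treat the counts as approximate.

```python
import re
from collections import Counter

# Combined log format: "METHOD path protocol" status size "referer" "user agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
CRAWLERS = ("PerplexityBot", "GPTBot", "OAI-SearchBot", "Googlebot")


def count_llms_txt_hits(lines):
    """Count requests for /llms.txt per known crawler user agent."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or match.group("path").split("?")[0] != "/llms.txt":
            continue
        for crawler in CRAWLERS:
            if crawler in match.group("agent"):
                hits[crawler] += 1
    return hits


# Made-up sample lines with simplified user agent strings.
sample = [
    '203.0.113.5 - - [01/May/2026:08:00:01 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.9 - - [01/May/2026:08:05:12 +0000] "GET /blog HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.9 - - [01/May/2026:08:06:40 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
]
print(count_llms_txt_hits(sample))
```

To run it on a real log, pass an open file object instead of the sample list, for example `count_llms_txt_hits(open("/path/to/access.log"))`.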

Common Mistakes to Avoid

Five implementation mistakes prevent llms.txt from working as intended.

1. Hosting in the Wrong Location

llms.txt must be at the root level: https://yourdomain.com/llms.txt

Wrong:

https://yourdomain.com/docs/llms.txt
https://yourdomain.com/.well-known/llms.txt
https://api.yourdomain.com/llms.txt

AI crawlers look for llms.txt at the domain root. Subdirectory or subdomain hosting will not be discovered automatically.

2. Blocking AI Crawlers in robots.txt

This is the most common mistake. Your robots.txt file might be blocking AI crawlers without you realizing it:

# Bad: Blocks all bots

User-agent: *

Disallow: /

This blocks every compliant crawler, including PerplexityBot, GPTBot, and Googlebot. Your llms.txt file exists but no AI crawler can read it.

Correct approach:

# Allow AI crawlers
User-agent: PerplexityBot
Allow: /

User-agent: GPTBot
Allow: /

User-agent: Googlebot
Allow: /

Verify your robots.txt is not blocking AI crawlers before deploying llms.txt.
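
One way to run that verification is with Python's standard `urllib.robotparser` module. The following is a minimal sketch. The function name `blocked_crawlers` and the crawler list are assumptions for illustration, and the standard library parser may differ in edge cases from how each vendor interprets robots.txt.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("PerplexityBot", "GPTBot", "OAI-SearchBot", "Googlebot")


def blocked_crawlers(robots_txt, url="https://yourdomain.com/llms.txt"):
    """Return the crawlers that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt: GPTBot is blocked, everyone else only loses /admin.
robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin
"""
print(blocked_crawlers(robots))
```

Pasting your live robots.txt into `robots` shows at a glance which crawlers cannot reach /llms.txt.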

3. Inconsistent Information

Ensure llms.txt matches your actual site content. If your llms.txt says you publish daily but your last blog post was three months ago, AI engines will discount the entire file.

Validate accuracy:

- Update frequencies match actual publishing schedules

- URL lists are current (no 404s)

- Authority claims are backed by actual citations

- Topic coverage matches content on your site
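
The URL check in the list above is easy to automate. The following is a minimal Python sketch that extracts every URL from the file and reports those that do not answer with 200. The helper names are assumptions for this example, and `http_status` follows redirects, so a redirected page that ends in 200 counts as live.

```python
import re
import urllib.error
import urllib.request

URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


def http_status(url):
    """Return the HTTP status for a HEAD request, or None if the request fails."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code
    except OSError:
        return None


def find_dead_links(llms_txt, get_status=http_status):
    """Return (url, status) pairs for listed URLs that do not answer 200."""
    dead = []
    for url in sorted(set(URL_PATTERN.findall(llms_txt))):
        status = get_status(url)
        if status != 200:
            dead.append((url, status))
    return dead


# Usage (performs network requests):
# print(find_dead_links(open("llms.txt").read()))
```

The `get_status` parameter exists so the check can be exercised offline with a stub before pointing it at a live site.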

4. Over-Engineering

Keep llms.txt simple. Complex nested structures, excessive metadata, and unnecessary detail reduce parsing efficiency.

Good:

name: Your Brand

description: Your site description

url: https://yourdomain.com

Bad:

site_configuration:
  entity:
    name:
      full: Your Brand
      legal: Your Brand, Inc.
      display:
        short: YourBrand
        abbreviated: YB
    description:
      primary: Your site description
      secondary: Additional description
      variations:
        - Variation 1
        - Variation 2

The second version provides minimal additional value while increasing parsing complexity for AI crawlers.

5. Forgetting to Update

llms.txt is not a set-and-forget file. Update it when:

- You launch new product lines or content sections

- Your positioning or target audience changes

- Authority scores or citation counts increase significantly

- You restructure your site or migrate URLs

Outdated llms.txt is worse than no llms.txt. It provides incorrect information that AI engines might rely on.

Measuring the Impact of llms.txt

How do you know if llms.txt is working? Track three metrics.

1. AI Crawler Request Frequency

Monitor requests to llms.txt in your server logs:

grep -F "/llms.txt" /path/to/access.log | grep -E "PerplexityBot|GPTBot" | wc -l

Track this weekly. Increasing request frequency indicates AI crawlers are discovering and using your llms.txt file.

2. Citation Share Growth

Measure how often your brand is cited in AI engines. Before implementing llms.txt, establish a baseline. Track weekly citations in:

- ChatGPT (search for your brand name)

- Perplexity (search queries relevant to your industry)

- Gemini (same queries as ChatGPT)

The time from llms.txt deployment to citation share increase varies by domain authority and content quality. Expect 4-8 weeks for measurable impact.
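
Citation share here is simply the fraction of sampled queries whose answer cites your brand. The following is a minimal Python sketch of the arithmetic. The function name and the sample numbers are hypothetical and only illustrate the calculation.

```python
def citation_share(cited_flags):
    """Fraction of sampled queries whose answer cited the brand."""
    if not cited_flags:
        return 0.0
    return sum(cited_flags) / len(cited_flags)


# Hypothetical samples: one flag per query, True when the brand was cited.
baseline = citation_share([True, False, False, False, False])  # 1 of 5 before deployment
week_six = citation_share([True, True, False, False, False])   # 2 of 5 six weeks later
print(f"baseline {baseline:.0%}, week six {week_six:.0%}")
```

Keeping the query list fixed from week to week makes the numbers comparable.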

3. Quality of Citations

Evaluate whether citations are accurate and contextual. AI engines that use llms.txt should cite your brand with correct positioning, audience, and value proposition.

If you see citations like “Your Brand is a startup in San Francisco” when your llms.txt says “Your Brand is an established AI visibility platform serving enterprise customers,” the data is not being used effectively.

llms.txt vs robots.txt vs Schema Markup

Three technologies often get confused. Here is how they differ.

robots.txt

Purpose: Tells search engines what they can and cannot crawl.

Format: Simple allow/disallow rules.

Example:

User-agent: *

Disallow: /admin

Allow: /

Used by: Google, Bing, traditional search engines.

Schema Markup

Purpose: Provides structured data about specific pages and entities.

Format: JSON-LD, microdata, RDFa.

Example:

{

"@context": "https://schema.org",

"@type": "Organization",

"name": "Your Brand",

"url": "https://yourdomain.com"

}

Used by: Google (rich snippets), search engines, some AI engines.

llms.txt

Purpose: Provides machine-readable site-level summary for AI engines.

Format: Markdown in the published proposal (shown below in this guide's key-value notation).

Example:

name: Your Brand

description: Your site description

url: https://yourdomain.com

primary_topics: [topic1, topic2]

Intended for: AI crawlers and assistants such as PerplexityBot and GPTBot; support varies by vendor.

These technologies are complementary, not competing. robots.txt controls crawl access. Schema markup provides page-level structured data. llms.txt provides site-level AI context. You need all three.

The Future of llms.txt and GEO

The llms.txt standard will evolve. Two developments are likely in 2026-2027.

1. Automated Generation

CMS platforms like WordPress, HubSpot, and Webflow will add llms.txt generation as a native feature. Content teams will configure llms.txt through UIs rather than editing text files manually.

2. Standard Schema Extensions

The llms.txt specification will expand to support more sophisticated entity modeling, topic authority verification, and citation preference signals. AI engines will require richer data as competition for citations increases.

The timing for adoption is now. Brands that implement llms.txt in 2026 establish the structured data infrastructure that may well become expected in 2027. Early adopters stand to gain citation share advantages that latecomers cannot easily overcome.

FAQ

Do I need technical skills to create llms.txt?

No. llms.txt is a plain text file with simple key-value pairs. Anyone can create and edit it in Notepad, TextEdit, or any text editor. The challenge is not technical complexity. It is knowing what information to include.

Will llms.txt guarantee AI citations?

No. llms.txt helps AI engines understand your site, but citations still depend on content quality, authority, and relevance. llms.txt is not a magic switch. It is a signal that increases the likelihood of being cited when your content is actually relevant to the query.

How often should I update llms.txt?

Update llms.txt when your site structure, positioning, or content strategy changes significantly. For most sites, this means quarterly reviews with updates as needed. The file should not change daily or weekly unless your site undergoes rapid restructuring.

Does llms.txt replace robots.txt?

No. robots.txt controls crawl access. llms.txt provides site context. They serve different purposes. You need both. In fact, robots.txt that blocks AI crawlers will prevent llms.txt from being read, rendering it useless.

Can I use llms.txt for SEO?

Indirectly at best. Content that is clearly structured for AI engines tends to be clearer for traditional search as well, but llms.txt itself is not an SEO tool. It is a GEO tool focused on AI discovery engines.

What happens if I don’t implement llms.txt?

Your site will still be crawled by AI engines, but they will have less context about your brand. This means:

- Slower indexing of new content

- Lower citation accuracy (more hallucinations)

- Reduced citation priority compared to sites with llms.txt

- Misaligned positioning in AI responses

The impact depends on your competition. If none of your competitors have llms.txt, the disadvantage is minimal. If they do, you are losing citation share.

Is llms.txt a ranking factor?

Not in the traditional sense. AI engines do not “rank” sites like Google. They synthesize information and cite sources. However, structured data from llms.txt functions as a trust signal. Sites that provide accurate structured data are more likely to be cited. In that sense, llms.txt influences citation probability, not rank.

Can I exclude specific AI engines from llms.txt?

Not directly. llms.txt is a passive file. Any crawler can read it. If you want to block specific AI engines, use robots.txt:

User-agent: GPTBot
Disallow: /

This blocks GPTBot, OpenAI's crawler, regardless of whether you have llms.txt.

How do I know if AI engines are reading my llms.txt?

Check your server logs for requests to /llms.txt from PerplexityBot, GPTBot, and Googlebot. If you see these requests, AI crawlers are fetching your file. If you don’t, they either haven’t discovered your site yet, do not request llms.txt, or are blocked by your robots.txt.

Should I include sensitive information in llms.txt?

No. llms.txt is publicly accessible. Anyone can read it. Do not include:

- API keys

- Internal metrics

- Customer data

- Proprietary strategies

- Unannounced product plans

llms.txt is for public-facing information about your brand, not internal secrets.

The Bottom Line

llms.txt is the new robots.txt for AI discovery. Wikipedia’s GEO update confirms that AI search is mainstream, not experimental. 95% of websites do not have llms.txt, which means early adopters have a significant competitive advantage.

The implementation takes five minutes. The impact lasts years. AI engines like ChatGPT, Perplexity, and Gemini are building the discovery layer of the next decade. Brands that provide structured data through llms.txt will be part of that layer. Brands that wait will be invisible.

The question is not whether GEO matters. The question is whether you will control how AI engines understand your brand, or let them guess.
